Intelligence is the new steel

Intelligence is a commodity, and we should start treating it like one

Given the impending rise of AI agents, it makes more sense to think of intelligence as a commodity, like steel or grain.

Thinking of intelligence as a commodity is better because it is both:

A more accurate way of thinking about intelligence and

A model that is better for humanity

For the purposes of this essay, I’m defining “intelligence” as “the capacity to accomplish goals” [1]. This capacity is becoming increasingly interchangeable and replaceable, and it is worth considering the implications of that change.

It is more accurate to think of intelligence as a commodity

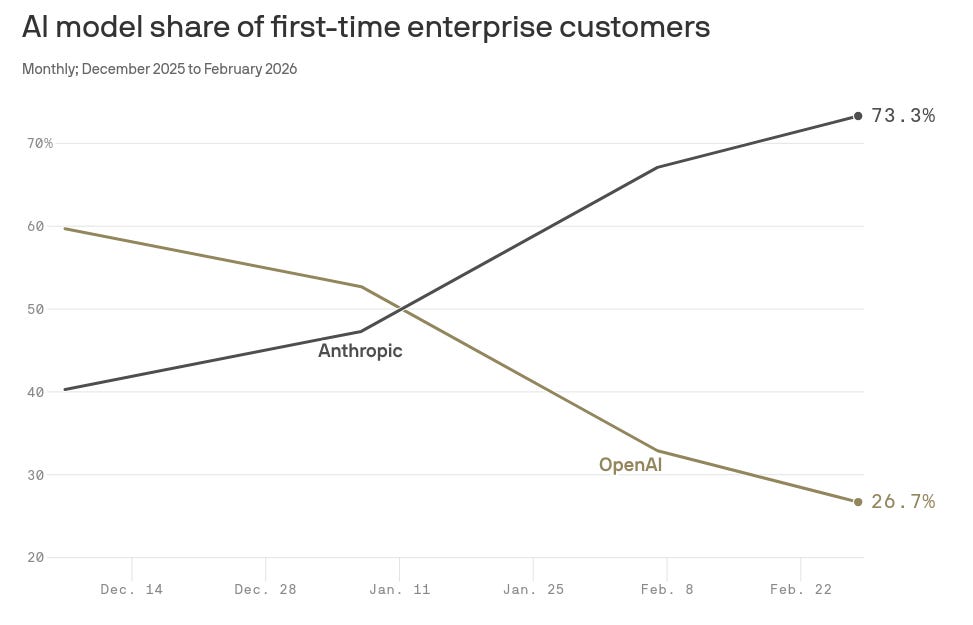

The dramatic shift from OpenAI usage to Anthropic usage over the past few months is a pretty clear indication that, at least today, the preferred model provider can change very quickly.

Models also seem to have similar capabilities and rates of advancement, regardless of which major lab produces them. For example, take a look at the performance of the top three labs on the GPQA Diamond benchmark over the past few years: it’s almost impossible to tell them apart.

Wikipedia defines a commodity as a case where “the market treats instances of the good as equivalent... with no regard to who produced them”. That users can switch so easily, and that each provider’s capabilities are advancing at roughly the same rate, both indicate that AI inference today is a commodity.

As users, we want a system that answers our question correctly and that generates useful text. Most people don’t care about precisely which logo was associated with the GPUs that produced the output.

Thinking about intelligence as a commodity has some fascinating implications for the future:

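We should expect the price to fall dramatically as production becomes more standardized and efficient. This is what typically happens for commodities (see, e.g., Wright’s Law, under which unit cost falls by a roughly constant percentage with each doubling of cumulative production).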

We should start thinking in terms of “how much” intelligence we need for a given task, and how we might quantify the “mental effort” (i.e., compute) required to accomplish a particular goal.

We should plan for a world where we can simply ‘buy’ more intelligence when it is needed, rather than going through a lengthy process of vetting and hiring to get any non-trivial job done.

We can better separate situations where we just need raw intelligence (pure “goal accomplishing mental effort”) from situations where we also need properties that AI systems cannot yet supply as easily (properties like intuition, judgment, taste, creativity, and accountability).

We should expect the returns on capital invested to be fairly modest. In markets for commodities like steel or corn (and now intelligence), competition drives profits toward zero over time.

We’re likely to see AI agents become increasingly capable of doing most of today’s knowledge work, probably over a fairly short time period (roughly the next decade).

If current trends of improvement in AI capabilities hold for even a few more years, we will have AI systems capable of doing a large fraction of the mental labor performed today. We will have systems capable of answering questions, performing basic online research, gathering information, and assembling it into both documents and decisions.
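To make the price-decline implication concrete, here is a minimal sketch of Wright’s Law in Python. The function name `wright_unit_cost`, the starting cost of 100, and the 20% learning rate are all assumptions chosen for illustration; they are not estimates for AI inference.

```python
import math


def wright_unit_cost(first_unit_cost: float, cumulative_units: float,
                     learning_rate: float) -> float:
    """Unit cost once `cumulative_units` have been produced, per Wright's Law.

    Each doubling of cumulative production cuts unit cost by `learning_rate`
    (0.2 means a 20% drop per doubling).
    """
    if cumulative_units < 1:
        raise ValueError("cumulative_units must be at least 1")
    if not 0 <= learning_rate < 1:
        raise ValueError("learning_rate must be in [0, 1)")
    # cost(n) = cost(1) * n ** log2(1 - learning_rate); the exponent is <= 0.
    exponent = math.log2(1.0 - learning_rate)
    return first_unit_cost * cumulative_units ** exponent


# Illustrative numbers only: first unit costs 100, 20% learning rate.
for units in (1, 2, 4, 8):
    print(units, f"{wright_unit_cost(100.0, units, 0.2):.2f}")
```

With these made-up inputs, the cost drops from 100 to 80, then 64, then about 51 as cumulative output doubles three times. The point of the sketch is only the shape of the curve: the decline is tied to cumulative production, not to the passage of time.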

This isn’t to say that there is only “one type of intelligence” that will excel at everything. But as intelligence becomes more widely available, we can increasingly tailor it to specific goals or domains. In that sense, some forms of intelligence may start to resemble a commodity like steel: it comes in many different forms (bars, rods, sheets, etc.) that can be shaped and applied for different uses.

AI system performance largely boils down to the quantity and quality of its components: how many GPUs and parameters, and how much training data of what quality, were used in its construction. Someday soon, the quality of an AI system may be considered more like the purity of steel.

But perhaps one of the most important implications is how it changes our collective relationship to intelligence. That brings us to the normative claim.

Treating intelligence as a commodity will be better for humanity

Not only does intelligence seem likely to become a commodity, but this is also a belief worth promoting and spreading.

There are several reasons for this.

First, if we view intelligence as a commodity, then AI companies are relegated to being providers of a basic service. If they’re just making an interchangeable commodity, then they’re welcome to compete on price, and we ought to encourage abstraction over any one provider.

In the same way I don’t want to be stuck with Comcast as my only option for internet, I don’t want to rely on a single AI company for my intelligence. We want to play these companies against each other, to avoid lock-in, and to treat what they sell like the commodity that it is. Over time, this will drive the price down as they are forced to compete by making their models ever more efficient and capable. This is capitalism at its best, but only if we can avoid engaging with AI inference as anything but a commodity.
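As a rough sketch of what “abstraction over any one provider” could look like in code, the Python below defines one small interface and picks whichever vendor is cheapest among those that clear a quality bar. Everything here is hypothetical: the `IntelligenceProvider` interface, the `StubProvider` class, the vendor names, and the prices and success rates are invented for illustration and do not correspond to any real API.

```python
from dataclasses import dataclass
from typing import Protocol, Sequence


class IntelligenceProvider(Protocol):
    """Anything that can take a prompt and return text (hypothetical interface)."""

    name: str
    price_per_task: float  # assumed: cost to run one task, in dollars
    success_rate: float    # assumed: measured on your own evaluation set

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubProvider:
    """Stand-in for a real vendor client; it makes no network calls."""

    name: str
    price_per_task: float
    success_rate: float

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] response to: {prompt}"


def cheapest_meeting_bar(providers: Sequence[IntelligenceProvider],
                         min_success_rate: float) -> IntelligenceProvider:
    """Pick the lowest-priced provider whose measured quality clears the bar."""
    eligible = [p for p in providers if p.success_rate >= min_success_rate]
    if not eligible:
        raise LookupError("no provider meets the required success rate")
    return min(eligible, key=lambda p: p.price_per_task)


# Made-up vendors and numbers, purely for illustration.
providers = [
    StubProvider("vendor_a", price_per_task=0.030, success_rate=0.95),
    StubProvider("vendor_b", price_per_task=0.012, success_rate=0.91),
    StubProvider("vendor_c", price_per_task=0.004, success_rate=0.70),
]
chosen = cheapest_meeting_bar(providers, min_success_rate=0.90)
print(chosen.name, chosen.complete("Summarize this contract."))
```

Because the calling code only depends on the interface, swapping vendors is a one-line change to the list, which is exactly the kind of low switching cost that keeps a market commodity-like.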

This naturally leads to asking more of the right questions, like “what grade [how good] is this intelligence?” and “how likely is this AI system to actually accomplish my goal [to a given level of quality]?” One of the most important aspects of a commodity is that it is of a known, well-understood level of quality.

Just as steel purity is graded by precise specifications (food-safe, tolerance for defect, etc.), so too should there be defined levels of intelligence. We want to measure and quantify these systems more precisely so we can ensure that we receive the level of quality we expect. This means spending more time and effort on evaluations, and on deeply understanding the flaws and defects in these systems, and precisely how often they occur. It’s not magic, any more than testing the strength of a steel beam.
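One hedged way to picture “grading” a system is to treat each evaluation task as a pass/fail trial and ask how confident we can be in the observed pass rate. The sketch below uses the Wilson score interval, a standard statistical technique for proportions; applying it to AI evaluations this way, the function names, and the example counts and thresholds are my assumptions, not an established grading standard.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a pass rate (z = 1.96 is roughly 95% confidence)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must be between 0 and trials")
    p = successes / trials
    denom = 1.0 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, center - half), min(1.0, center + half)


def meets_grade(successes: int, trials: int, required_rate: float) -> bool:
    """True only if even the pessimistic end of the interval clears the bar."""
    lower, _ = wilson_interval(successes, trials)
    return lower >= required_rate


# Hypothetical evaluation results: the same 90% pass rate at two sample sizes.
print(wilson_interval(90, 100))
print(meets_grade(90, 100, 0.80), meets_grade(9, 10, 0.80))
```

In this made-up example, 90 passes out of 100 tasks supports an 80% grade, while 9 out of 10 does not, even though the raw pass rate is identical. That is the steel-beam point in miniature: a grade is only as trustworthy as the amount of testing behind it.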

Another benefit of viewing intelligence as a commodity is that it is quite equalizing for most of us. In a world where intelligence is something that can be tapped into and applied to any problem as easily as turning on a faucet, there’s no longer an implicit hierarchy in society where “experts” get to tell the rest of us how the world works or what to think.

When anyone can spin up an AI PhD to investigate a question, we will have a greater capacity to investigate the questions we’re curious and passionate about. When we know the quality level of that AI PhD (i.e., how likely it is to create a correct, truthful response for a given question), then we can start to determine how much we trust those answers. There will still be inequality, but less than before: when everyone has access to the same level of intelligence, anyone can use AI to accomplish the goals they care about.

There are good reasons to believe, rationally, that intelligence is most accurately viewed as a commodity. But, more importantly, the world will be better if we act like intelligence is a commodity. Rather than elevating these AI labs and being so impressed at their every new release, we should start accepting that this is just a new type of steel that enables us to build new types of structures.

Once we accept that AI companies are approximately as interesting as steel manufacturers, perhaps then we can start talking about the more interesting questions: given this new type of material, what do we want to build? Do we want to make multi-story parking lots, or do we want to build cathedrals?

[1] The best definition I’ve encountered for intelligence is from Shane Legg and Marcus Hutter in their paper “Universal Intelligence: A Definition of Machine Intelligence”. They define intelligence as: “an agent’s ability to achieve goals in a wide range of environments.”